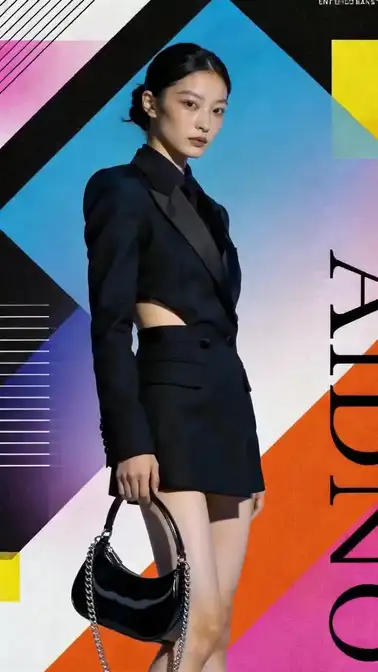

Cat & dog talk show

Accurate Voice & Sound

Cat and dog talk show segment, emotionally rich, stand-up comedy style...

Generate voice, ambience, and music together with the video output.

How it works: Instead of generating silent video and adding audio in post, the model produces picture and sound in the same pass. It reads the visual context (character lip movements, environment type, action intensity) and generates matching voice, ambience, sound effects, or background music. Text prompts can guide the audio style ('upbeat electronic BGM', 'soft ambient forest sounds', 'female voiceover in English').

When to use this: Ad production where every variant needs localized voiceover; social-media shorts where BGM and timing matter but manual syncing is too slow; prototyping scenes where you want to evaluate picture-plus-sound together before investing in professional audio; multilingual content where the same video needs voiceovers in different languages.

Tips and practical notes: For best lip-sync results, keep character faces clearly visible and unobstructed. Specify the language and tone of voice in your prompt: 'calm male narrator in Japanese' gives better results than just 'add voice.' When combining native audio with music sync, the model can handle BGM beat alignment and dialogue simultaneously. Review audio in the first pass to catch timing issues early rather than generating many variants before checking.
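The prompt tips above can be sketched as a small helper that always states voice, language, and music explicitly, and that produces one prompt per language for localized variants. This is a minimal illustration only: `AudioDirection`, `build_prompt`, `localized_variants`, and the `Voice:` / `Music:` / `Ambience:` labels are hypothetical names chosen for this example, not part of any Seedance 2.0 interface, and the model is not known to require this layout.

```python
from dataclasses import dataclass, replace
from typing import Dict, List, Optional


@dataclass
class AudioDirection:
    """Hypothetical container for the audio cues a text prompt can carry."""
    voice: Optional[str] = None      # e.g. "calm male narrator"
    language: Optional[str] = None   # e.g. "Japanese"
    bgm: Optional[str] = None        # e.g. "upbeat electronic BGM"
    ambience: Optional[str] = None   # e.g. "soft ambient forest sounds"


def build_prompt(scene: str, audio: AudioDirection) -> str:
    """Append explicit audio cues to a scene description (assumed layout)."""
    parts = [scene.strip().rstrip(".")]
    if audio.voice and audio.language:
        parts.append(f"Voice: {audio.voice} in {audio.language}")
    elif audio.voice:
        parts.append(f"Voice: {audio.voice}")
    elif audio.language:
        parts.append(f"Voice: voiceover in {audio.language}")
    if audio.bgm:
        parts.append(f"Music: {audio.bgm}")
    if audio.ambience:
        parts.append(f"Ambience: {audio.ambience}")
    return ". ".join(parts) + "."


def localized_variants(scene: str, audio: AudioDirection,
                       languages: List[str]) -> Dict[str, str]:
    """Build one prompt per language, keeping every other audio cue the same."""
    return {
        lang: build_prompt(scene, replace(audio, language=lang))
        for lang in languages
    }


if __name__ == "__main__":
    direction = AudioDirection(voice="calm male narrator",
                               bgm="upbeat electronic BGM")
    scene = "A cat and a dog host a talk show."
    for lang, prompt in localized_variants(scene, direction,
                                           ["English", "Japanese"]).items():
        print(f"{lang}: {prompt}")
```

Keeping the audio cues in one structure makes it easy to change only the language between variants, which matches the localized-voiceover use case described above. The resulting strings would still need to be pasted or sent to whichever platform hosts the model.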

If a video still needs BGM, ambience, or lip-synced dialogue, the model can generate picture and sound together so those audio choices can be reviewed in the same pass.

Seedance 2.0 generates voice, sound effects, and music alongside video in a single pass — with lip-sync, multilingual support, and a Unilever mass-production case study.

Related guides

Guide

What Is Seedance 2.0 by ByteDance? Official Website, Release Date, Access & Hardware

Current public overview of Seedance 2.0 by ByteDance: official website, February 12, 2026 release date, Dreamina access, Doubao/豆包 connection, hardware requirements, multimodal inputs, 2K / 15-second outputs, global availability, and what still depends on the platform.

Guide

Seedance 2.0 Omni-Reference & Multimodal Input — Images, Video & Audio References Explained

Seedance 2.0 Omni-Reference multimodal input: up to 9 images, 3 videos, and 3 audio clips, plus text. An @ tag system references the uploaded assets. Native audio-video joint generation.

Guide

Seedance 2.0 Use Cases — Real Examples for Ads, Film, Education & More

Seedance 2.0 use cases: e-commerce ads, TVC, product demos, film previz, MV, education, real estate, and short narrative. Based on official blog and third-party case studies.

Guide

Promo videos stitched from multiple clips: workflow field notes

Honest workflow notes when a longer promo is built from several Seedance 2.0 generations: unified references, the per-clip duration cap, audio continuity, and dialogue pacing.

Guide

Seedance 2.0 Shot Design Workflow — Cinema-Grade Video Prompts

Master the 5-step shot design workflow for Seedance 2.0: from requirement analysis through visual diagnosis, six-element assembly, validation, to professional delivery. Includes 28+ director presets, three-layer lighting, and multi-segment storyboarding.

Guide

Short-Form Social Video with Seedance-Style Models — Reels, Shorts, TikTok-Class Pacing (2026)

Vertical aspect ratios, hook-first prompting, and audio loudness considerations for algorithmic feeds — third-party workflow notes.

Related capabilities

Music Sync

Beat-synced music and rhythm alignment.

Upload music; video cuts and motion align to the beat.

Emotion Expression

Better emotional performance and expression.

Character shows joy, sadness, surprise; natural face and body language.

Video Editing

Character replacement, trimming, and additions.

Inpainting, character swap, background extend, add/remove elements; no reshoot.